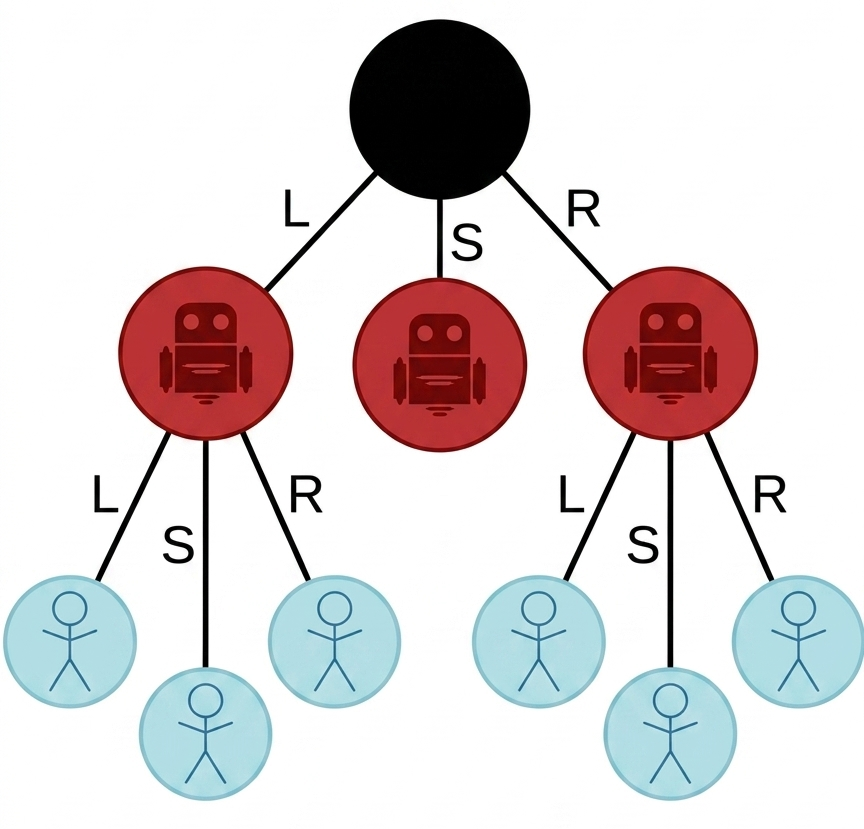

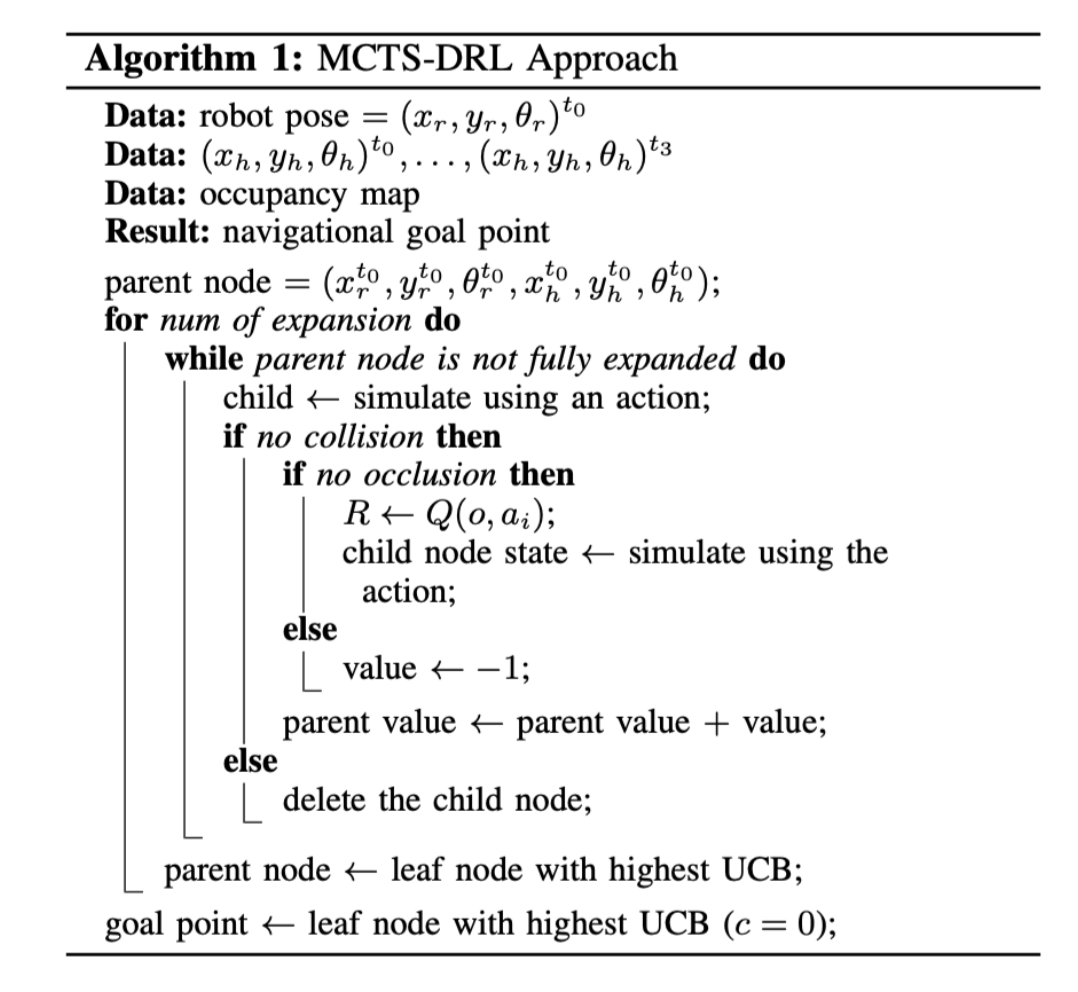

We replicate the MCTS-DRL framework of Leisiazar et al. for robotic follow-ahead navigation. The method integrates Monte Carlo Tree Search (MCTS) with a pretrained reinforcement learning (RL) value function: learned value estimates replace random rollouts, which yields consistent short-term navigational goals, while an LSTM-based human action predictor biases tree expansion toward more probable human futures. We re-implement the full framework from scratch in ROS2, including the MCTS planner, RL value function, and LSTM predictor, and deploy the system on a QBot2e, using VICON for pose estimation and Cartographer for mapping. Experiments on a range of trajectories probe follow-ahead behavior under controlled conditions.
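To make the planning loop concrete, the following is a minimal sketch of MCTS in which a value function is queried at each leaf instead of running a random rollout, and the human's next move is sampled from a predictor's probabilities during expansion. It is an illustration under stated assumptions, not the implementation described here: the 1-D state `(robot, human)`, the action set, and the functions `toy_value`, `toy_predictor`, and `toy_step` are hypothetical stand-ins for the RL value network, the LSTM predictor, and the motion model.

```python
import math
import random
from dataclasses import dataclass, field

ROBOT_ACTIONS = (-1, 0, 1)  # hypothetical discrete robot moves on a 1-D line


@dataclass
class Node:
    state: tuple                      # (robot_position, human_position)
    visits: int = 0
    value_sum: float = 0.0
    children: dict = field(default_factory=dict)


def ucb(parent, child, c):
    """Upper confidence bound; unvisited children are tried first."""
    if child.visits == 0:
        return math.inf
    mean = child.value_sum / child.visits
    return mean + c * math.sqrt(math.log(parent.visits) / child.visits)


def search(root_state, value_fn, human_predictor, step_fn,
           n_iter=300, c=1.0, rng=None):
    rng = rng or random.Random()
    root = Node(root_state)
    for _ in range(n_iter):
        node, path = root, [root]
        # Selection: descend by UCB until reaching a leaf.
        while node.children:
            parent = node
            node = max(parent.children.values(),
                       key=lambda ch: ucb(parent, ch, c))
            path.append(node)
        # Expansion: sample the human's move from the predictor, so that
        # likely human futures are expanded more often (assumed scheme).
        probs = human_predictor(node.state)
        human_move = rng.choices(list(probs), weights=list(probs.values()))[0]
        for action in ROBOT_ACTIONS:
            node.children[action] = Node(step_fn(node.state, action, human_move))
        # Evaluation: query the value function instead of a random rollout.
        value = value_fn(node.state)
        # Backup: propagate the estimate along the visited path.
        for visited in path:
            visited.visits += 1
            visited.value_sum += value
    # The most-visited root action serves as the short-term goal.
    return max(root.children, key=lambda a: root.children[a].visits)


# Toy stand-ins (assumptions): the robot should stay one cell ahead of the human.
def toy_value(state):
    robot, human = state
    return -abs(robot - (human + 1))


def toy_predictor(state):
    return {0: 0.2, 1: 0.8}  # human stays or steps forward


def toy_step(state, robot_action, human_move):
    robot, human = state
    return (robot + robot_action, human + human_move)


best_action = search((0, 0), toy_value, toy_predictor, toy_step,
                     rng=random.Random(0))
```

In this toy setting the search favors moving forward, since that keeps the robot closest to the cell ahead of the human. Replacing rollouts with a value query keeps the cost of each iteration fixed, which is one reason this style of search suits online planning.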